In a significant step for artificial intelligence (AI) in mathematics, researchers have unveiled AlphaGeometry, an AI system that solves complex geometry problems at a level approaching that of a human Olympiad gold medalist, outperforming previous state-of-the-art approaches. The findings, published in a paper in Nature, mark a notable advance in AI reasoning and its application to challenging mathematical domains.

The International Mathematical Olympiad (IMO) serves as a global stage for the brightest high-school mathematicians. The competition not only showcases emerging talent but also provides a testing ground for cutting-edge AI systems. AlphaGeometry, introduced in the study, solved 25 out of 30 Olympiad geometry problems within the standard time limit, far more than its predecessors.

The previous leading method, known as “Wu’s method,” solved only 10 of the same 30 problems. AlphaGeometry’s performance also nearly matched that of human gold medalists, who solved 25.9 of these problems on average. This result underscores the system’s capacity to reason logically and its potential to contribute to the development of more advanced and general AI systems.

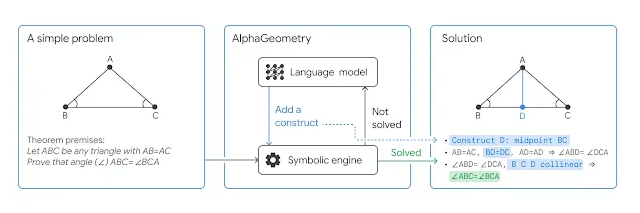

AlphaGeometry adopts a neuro-symbolic approach, combining a neural language model with a symbolic deduction engine. The language model supplies fast, intuitive ideas, while the deduction engine carries out deliberate, rule-based reasoning. Working together, the two components let the system tackle complex geometry theorems in a balanced and efficient way.
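To make the division of labor concrete, here is a minimal toy sketch in Python of how a symbolic engine and an idea-proposing component might alternate. It is not AlphaGeometry's code: the facts are plain strings, the rules are hand-written, and `propose_construction` is a hypothetical stand-in for the language model. All names here are assumptions made for illustration.

```python
# Toy neuro-symbolic loop. Facts are plain strings and rules are hand-written;
# nothing here reflects AlphaGeometry's real data structures.

# Each rule is (set of required facts, fact that follows from them).
RULES = [
    (frozenset({"AB = AC", "D is the midpoint of BC"}),
     "triangle ABD is congruent to triangle ACD"),
    (frozenset({"triangle ABD is congruent to triangle ACD"}),
     "angle ABC = angle ACB"),
]


def deduce_closure(facts, rules):
    """Symbolic step: apply rules repeatedly until no new fact appears."""
    known = set(facts)
    changed = True
    while changed:
        changed = False
        for required, conclusion in rules:
            if required <= known and conclusion not in known:
                known.add(conclusion)
                changed = True
    return known


def propose_construction(known, goal):
    """Hypothetical stand-in for the language model: suggest one new construct."""
    suggestion = "D is the midpoint of BC"
    return None if suggestion in known else suggestion


def solve(premises, goal, rules, propose, max_rounds=3):
    """Alternate deduction with proposed constructions until the goal is reached."""
    known = deduce_closure(premises, rules)
    added = []
    for _ in range(max_rounds):
        if goal in known:
            break
        suggestion = propose(known, goal)
        if suggestion is None or suggestion in known:
            break  # the proposer has nothing new to offer
        added.append(suggestion)
        known = deduce_closure(known | {suggestion}, rules)
    return goal in known, added


# Isosceles triangle: the deduction engine alone is stuck until the
# midpoint D is added as an auxiliary construction.
solved, constructions = solve({"AB = AC"}, "angle ABC = angle ACB",
                              RULES, propose_construction)
```

In this toy run the deduction engine cannot reach the goal from `AB = AC` alone; once the proposer adds the midpoint, the two rules fire in sequence and the goal follows.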

What sets AlphaGeometry apart is its ability to generate synthetic training data on an unprecedented scale. With a pool of 100 million unique examples, the system can learn without relying on human demonstrations, overcoming the limitations posed by data scarcity. The synthetic data generation process involves a neuro-symbolic system that mimics the human process of learning geometry, using parallelized computing to derive relationships and proofs from random geometric diagrams.
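The idea of deriving relationships and proofs from random diagrams can be sketched in the same toy style. The Python example below is an illustration under stated assumptions, not the published pipeline: the facts `a` to `f`, the rules, and the function names are all hypothetical. It samples random premises, derives everything that follows, and traces each derived fact back to the premises it actually needed, yielding one training example per derived fact.

```python
import random

# Hypothetical toy rules: (required facts, derived fact).
RULES = [
    (("a", "b"), "c"),
    (("c",), "d"),
    (("b", "e"), "f"),
]
BASE_FACTS = ["a", "b", "e"]  # stand-ins for facts read off a random diagram


def deduce_with_trace(premises):
    """Forward-chain and record which facts each derived fact came from."""
    known = set(premises)
    parents = {}
    changed = True
    while changed:
        changed = False
        for required, conclusion in RULES:
            if conclusion not in known and all(r in known for r in required):
                known.add(conclusion)
                parents[conclusion] = required
                changed = True
    return parents


def trace_back(fact, parents):
    """Return the premises a derived fact depends on and its ordered proof steps."""
    used, steps = set(), []

    def visit(f):
        if f not in parents:
            used.add(f)  # f is a starting premise, not a derived fact
            return
        for p in parents[f]:
            visit(p)
        step = (parents[f], f)
        if step not in steps:
            steps.append(step)

    visit(fact)
    return sorted(used), steps


def generate_examples(n_diagrams, seed=0):
    """Sample random premise sets and emit one example per derived fact."""
    rng = random.Random(seed)
    examples = []
    for _ in range(n_diagrams):
        premises = rng.sample(BASE_FACTS, rng.randint(1, len(BASE_FACTS)))
        parents = deduce_with_trace(premises)
        for fact in parents:
            used, steps = trace_back(fact, parents)
            examples.append({"premises": used, "goal": fact, "proof": steps})
    return examples
```

Because each sampled diagram is processed independently, a loop like the one in `generate_examples` is straightforward to spread across many workers, which is one plausible reading of the parallelized computing mentioned above.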

Evan Chen, a math coach and former Olympiad gold-medalist, praised AlphaGeometry’s verifiable and clean output. He highlighted that the system’s solutions are machine-verifiable, eliminating the hit-or-miss nature of past AI solutions. Despite its machine-driven precision, AlphaGeometry’s output remains human-readable, using classical geometry rules familiar to students.

While AlphaGeometry excels in geometry problem-solving, its developers have broader aspirations. The system aims to pioneer mathematical reasoning for next-generation AI systems, showcasing its potential to generalize across various mathematical fields. As the research team opens up the code and model for AlphaGeometry, they anticipate collaborative efforts that will further expand the frontiers of AI, mathematics, and science.

In the words of Ngô Bảo Châu, Fields Medalist and IMO Gold Medalist, “It makes perfect sense to me now that researchers in AI are trying their hands on the IMO geometry problems first because finding solutions for them works a little bit like chess in the sense that we have a rather small number of sensible moves at every step. But I still find it stunning that they could make it work. It’s an impressive achievement.”

Check out the official blog by Google DeepMind here.
